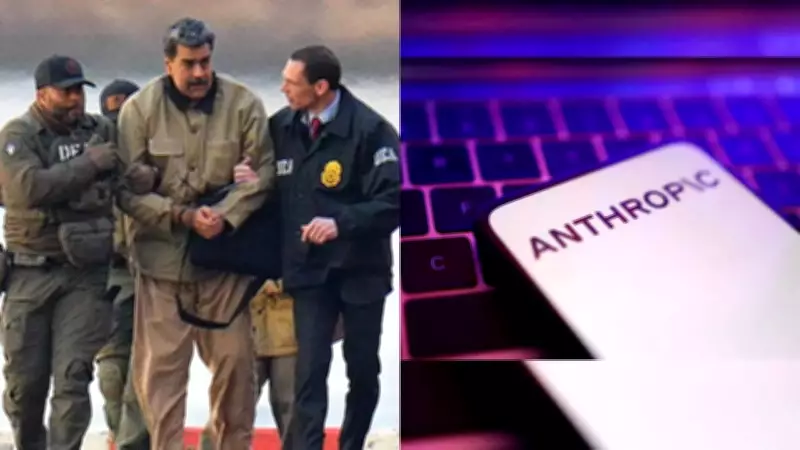

Claude AI Reportedly Deployed in US Military Operation to Capture Venezuelan Leader

The Wall Street Journal has reported that Anthropic's artificial intelligence model Claude was used in the United States military operation that led to the capture of former Venezuelan President Nicolas Maduro. According to sources familiar with the matter, Claude was deployed through Anthropic's partnership with data analytics firm Palantir Technologies, whose platforms are widely used by the US Defense Department and federal law enforcement agencies.

Details of the Operation and AI Involvement

The mission to apprehend Maduro and his wife included bombing several strategic sites in Caracas last month. In an early January raid, US forces captured Maduro and transported him to New York to face drug trafficking charges. Reuters could not independently verify the report, and both the US Defense Department and the White House declined to comment immediately. If confirmed, the report carries significant implications for military uses of AI.

Anthropic declined to comment on operational specifics. "We cannot comment on whether Claude, or any other AI model, was used for any specific operation, classified or otherwise," an Anthropic spokesman said. "Any use of Claude, whether in the private sector or across government, is required to comply with our Usage Policies, which govern how Claude can be deployed. We work closely with our partners to ensure compliance."

Ethical Questions and Potential Policy Conflicts

This reported deployment raises substantial ethical concerns, particularly because Anthropic's usage policies explicitly prohibit Claude from being used to:

- Facilitate violence or warfare

- Develop weapons systems

- Conduct surveillance operations

The reported use of Claude in a raid that included bombing operations highlights growing tensions between AI developers' ethical guidelines and military applications. The Wall Street Journal previously reported that Anthropic's concerns about how the Pentagon uses Claude have led administration officials to consider canceling a contract worth up to $200 million.

Military AI Landscape and Strategic Shifts

According to sources familiar with the matter, Anthropic was the first AI model developer whose technology was used in classified operations by the Department of Defense. It remains unclear whether other AI tools were used in the Venezuela operation for unclassified tasks. The Pentagon has been pushing leading AI companies, including OpenAI and Anthropic, to make their tools available on classified networks with fewer restrictions than those applied to commercial users.

Many AI companies are building custom tools for the US military, but most of these tools run only on unclassified networks used for administrative functions. Anthropic is the only major AI developer whose system is accessible in classified settings through third-party partners, although government users remain bound by its usage policies.

Broader Implications for AI Governance

At a January event announcing that the Pentagon would work with xAI, Defense Secretary Pete Hegseth said the agency would not "employ AI models that won't allow you to fight wars," a comment that referred to the discussions administration officials have held with Anthropic.

This evolving relationship between AI developers and defense agencies reflects a significant strategic shift as artificial intelligence tools become increasingly integrated into military operations. These systems are now deployed for diverse tasks including:

- Document analysis and intelligence summarization

- Support for autonomous systems and decision-making

- Operational planning and execution support

The reported use of Claude in the Maduro raid underscores the expanding role of commercial AI models in US military operations, even as intense debates continue regarding ethical boundaries, usage policy enforcement, and regulatory oversight frameworks.

Understanding Anthropic's Claude AI System

Anthropic's Claude is an advanced artificial intelligence chatbot and large language model designed for:

- Sophisticated text generation and reasoning

- Coding assistance and programming tasks

- Comprehensive data analysis and report generation

- Document summarization and answering complex queries

Claude, a family of large language models that competes with OpenAI's ChatGPT and Google's Gemini, has been positioned by Anthropic as a safety-focused AI system. Founded in 2021 by former OpenAI executives including CEO Dario Amodei, Anthropic has rapidly become one of the world's leading AI startups, recently raising $30 billion in funding that values the company at approximately $380 billion.

Amodei has consistently advocated for stronger regulation and protective guardrails to mitigate the risks of advanced AI systems, a stance that sits in apparent tension with the company's reported military applications.