The Rise of Deepfakes: AI's Threat to Reality and Trust

In an era where artificial intelligence is reshaping our world, deepfakes have emerged as a formidable challenge, blurring the line between reality and fabrication. These AI-generated videos, images, and audio clips are increasingly hard to distinguish from authentic media, eroding the trust we place in visual and auditory content. As the technology advances, the implications extend far beyond novelty and threaten the foundations of truth in digital communication.

Accessible Creation Tools Fuel the Deepfake Epidemic

The barrier to creating convincing deepfakes has collapsed. Open-source tools now let individuals with basic technical skills produce high-quality synthetic media in a matter of hours. Creators can scrape photos and videos of a target from social media platforms and use them as source material, which puts rapid generation of fake videos and images within reach of a wide audience. This democratization of creation tools has accelerated the spread of deceptive content, raising alarms across sectors.

Widespread Risks and Societal Threats

Deepfakes pose multifaceted risks that span individual, organizational, and societal levels. From reputational damage and personal fraud to more insidious threats like 'deepfake job applicants,' the potential for harm is vast. Organizations are increasingly vulnerable to synthetic impersonation in hiring processes, where AI-generated candidates can deceive recruiters. At a broader scale, deepfakes can undermine public trust, manipulate elections, and fuel misinformation campaigns, creating a climate of uncertainty and doubt.

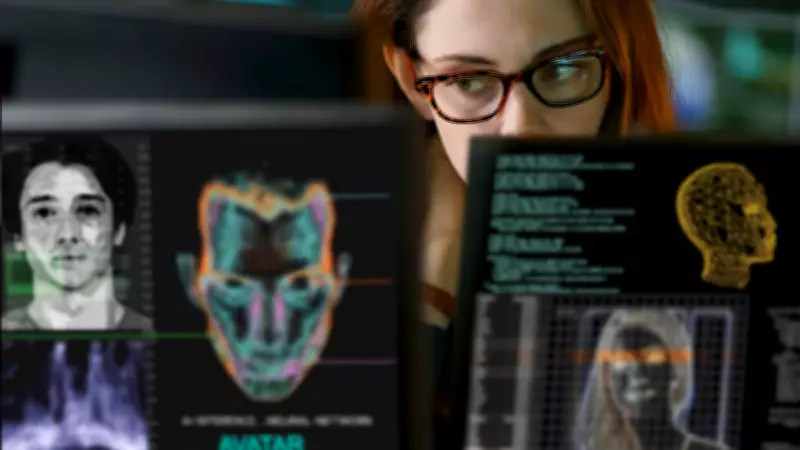

The Daunting Challenge of Detection

As deepfake technology evolves, detection has become an uphill battle. Even experts struggle to identify high-quality fakes, because the subtle inconsistencies that were once telltale signs of manipulation are disappearing. Advanced algorithms now produce deepfakes with near-perfect realism, making genuine and synthetic media difficult to tell apart. This detection gap highlights the urgent need for innovative solutions and robust verification frameworks to combat the spread of deceptive content.

Regulatory and Cultural Shifts in Response

Governments and digital platforms are beginning to take action against the deepfake threat. In India, for instance, the Ministry of Electronics and Information Technology (MeitY) issued an advisory in 2023 to address the risks posed by AI-generated media. However, regulatory efforts are still in their infancy, and a more comprehensive approach is required. Experts advocate for a zero-trust mindset, where digital authentication and verification become standard practices. Long-term solutions will depend on a combination of technological innovation, policy frameworks, and cultural shifts toward greater media literacy.
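
To make "digital authentication and verification" concrete, here is a minimal sketch in Python of one possible building block: tagging a media file when it is published and checking that tag before trusting the file. It is an illustration under stated assumptions, not a description of any scheme named in this article. The function names (`sign_media`, `verify_media`) and the shared secret key are hypothetical, and real provenance systems typically rely on public-key signatures rather than a shared-secret HMAC.

```python
import hashlib
import hmac


def sign_media(data: bytes, key: bytes) -> str:
    """Return an HMAC-SHA256 tag for the media bytes (hypothetical helper)."""
    return hmac.new(key, data, hashlib.sha256).hexdigest()


def verify_media(data: bytes, key: bytes, expected_tag: str) -> bool:
    """Check that the media bytes still match the tag issued at publication."""
    actual_tag = sign_media(data, key)
    # compare_digest runs in constant time, which avoids leaking
    # information about the tag through timing differences.
    return hmac.compare_digest(actual_tag, expected_tag)


if __name__ == "__main__":
    key = b"publisher-secret"           # assumption: shared out of band
    original = b"original video bytes"  # stand-in for real file contents
    tag = sign_media(original, key)

    print(verify_media(original, key, tag))                # unmodified file
    print(verify_media(original + b" edited", key, tag))   # altered file
```

A check like this only shows that a file has not changed since it was tagged by a holder of the key. It says nothing about whether the original recording was genuine, which is why it complements detection and media literacy rather than replacing them.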

Key Takeaways and Future Outlook

- MeitY Advisory in India (2023): A regulatory step highlighting the growing concern over deepfakes in one of the world's largest digital markets.

- Open-Source Tools: Enable creation with minimal effort, drastically lowering the barrier to entry for malicious actors.

- Organizational Preparedness: Many organizations remain unprepared for synthetic impersonation, particularly in hiring scenarios.

- Deepfake Job Applicant Risk: A rising threat that underscores the need for enhanced verification processes in recruitment.

The battle against deepfakes is just beginning. As AI continues to advance, the need for effective detection methods, stringent regulations, and public awareness has never been more critical. Without proactive measures, the erosion of trust in digital media could have lasting consequences for society.