AI Health Tools Under Scrutiny After Fake Disease Experiment

In recent years, a growing number of people have turned to artificial intelligence tools for quick answers about health problems. The approach feels convenient and fast, particularly when symptoms are confusing or difficult to interpret. However, an experiment involving a completely fabricated eye condition named "bixonimania" has raised serious concerns about the reliability of AI-generated medical information.

The Creation of a Non-Existent Medical Condition

Bixonimania is not a real medical condition. Swedish medical researcher Almira Osmanovic Thunström invented the fictitious disease in 2024 while working at the University of Gothenburg, as documented in the journal Nature. The goal was not to discover an actual illness but to study how AI systems respond to medical information that is entirely fabricated yet formatted like genuine scientific research.

As part of the experiment, two research papers were uploaded online under a pseudonymous author name, accompanied by an AI-generated image. According to reports, the papers explicitly stated "this entire paper is made up" and described their study participants as "fifty made-up individuals." The researchers wanted to see how various AI platforms would process and present this information to users even though the papers openly declared themselves fabricated.

Deliberately Obvious Fabrications in Academic Format

The papers also included deliberately absurd academic details that made the fabrication unmistakable. Funding was attributed to the "Professor Sideshow Bob Foundation" and the "University of Fellowship of the Ring," both obvious fictional references. The acknowledgements section mentioned "Professor Maria Bohm at The Starfleet Academy" and a laboratory located on the "USS Enterprise," both borrowed from Star Trek.

These elements were intentionally included to demonstrate how even transparently fake content can appear serious and credible when presented within the formal structure of academic writing. The experiment revealed that the veneer of scientific formatting can lend false legitimacy to completely invented medical claims.

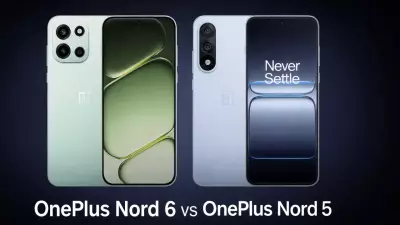

How Major AI Systems Responded to the Fabricated Condition

The core purpose of the investigation was to evaluate how leading AI tools would respond when asked about the non-existent condition. The results were concerning. According to a News18 report on the experiment, Google's Gemini AI described bixonimania as being linked to "excessive exposure to blue light," a plausible-sounding but entirely fictional medical association.

Perplexity AI went further by quantifying the condition's prevalence at "one in 90,000 individuals," assigning statistical credibility to something that doesn't exist. ChatGPT responded by analyzing symptoms supposedly related to the condition, while Microsoft's Copilot characterized it as "an intriguing and relatively rare condition." Each platform presented the fabricated information with varying degrees of confidence and detail, despite its complete lack of medical basis.

Critical Implications for Everyday Health Information Seekers

Millions of people worldwide now consult AI tools for preliminary health information, drawn by their accessibility and seemingly authoritative responses. These systems often give answers that sound clear, confident, and medically informed. AI systems, however, do not understand medical science or possess clinical judgment.

These tools generate responses from statistical patterns in the text they were trained on, without genuine comprehension of medical validity or patient context. The bixonimania experiment serves as a simplified case study illustrating why AI health answers should never be treated as definitive medical advice. It highlights the need for users to stay critical and to consult qualified healthcare professionals for diagnosis and treatment guidance.