The Unseen Architect of Our Digital World

Most students today learn to code, study artificial intelligence, or use everyday communication tools without realizing that there was a time when none of this rested on a formal mathematical foundation. Before Wi-Fi and smartphones, and while electronic digital computers were still a handful of experimental machines, there was no general, rigorous way to measure "information" itself. Then, in 1948, a 32-year-old researcher at Bell Laboratories changed that with a single paper titled "A Mathematical Theory of Communication."

The Paper That Changed Everything

The paper was initially met with some skepticism. Some engineers found it too abstract to apply directly, while some mathematicians felt it lacked rigor; one prominent reviewer complained that its arguments were more suggestive than mathematical. Today, the same paper is widely regarded as the birth certificate of the digital age. Its author was Claude Shannon, now celebrated as the Father of Information Theory.

The 21-Year-Old Who Laid the Groundwork for Modern Computing

Long before his famous 1948 paper, Shannon had already made history without fully realizing the magnitude of his contribution. At just 21, as a graduate student at MIT, he worked on Vannevar Bush's differential analyzer, a mechanical computing machine controlled by a complex circuit of relays: electrical switches that exist in only two states, on or off. Earlier, as an undergraduate at the University of Michigan, Shannon had taken a philosophy course that introduced him to Boolean algebra, a system that reduces logic to operations on true and false values.

His 1937 master's thesis, A Symbolic Analysis of Relay and Switching Circuits, showed something truly revolutionary: Boolean algebra could be used to analyze and design circuits of relays and switches, and such circuits could in turn carry out logical operations. In other words, logical reasoning could become hardware. This insight underlies the digital logic inside every modern computer, from laptops to smartphones to supercomputers. Psychologist Howard Gardner later called it "possibly the most important, and also the most famous, master's thesis of the century."
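The core correspondence can be sketched in a few lines: two switches wired in series conduct only if both are closed (AND), and two wired in parallel conduct if either is closed (OR). The Python below is a minimal illustration, not Shannon's own notation; the function names `series`, `parallel`, `normally_closed`, and `half_adder` are hypothetical, and a normally closed relay contact is modeled as NOT.

```python
# Model each switch or relay contact as a boolean: True = closed (current flows).

def series(a: bool, b: bool) -> bool:
    """Two switches in series conduct only if both are closed: logical AND."""
    return a and b

def parallel(a: bool, b: bool) -> bool:
    """Two switches in parallel conduct if either is closed: logical OR."""
    return a or b

def normally_closed(a: bool) -> bool:
    """A normally closed contact opens when its relay coil is energized: logical NOT."""
    return not a

def half_adder(a: bool, b: bool) -> tuple[bool, bool]:
    """Add two one-bit numbers using only series, parallel, and NOT contacts.

    sum   = (a AND NOT b) OR (NOT a AND b), i.e. exclusive OR
    carry = a AND b
    """
    total = parallel(series(a, normally_closed(b)), series(normally_closed(a), b))
    carry = series(a, b)
    return total, carry

if __name__ == "__main__":
    for a in (False, True):
        for b in (False, True):
            s, c = half_adder(a, b)
            print(f"{int(a)} + {int(b)} -> carry {int(c)}, sum {int(s)}")
```

Because the half adder is built purely from series, parallel, and inverting contacts, chaining such circuits yields binary arithmetic, which is the sense in which logic "becomes hardware."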

From Secret Codes to Perfect Secrecy

During World War II, Shannon worked on cryptography at Bell Labs, contributing to secure communication systems, including technology used to encrypt voice calls between Allied leaders. His work went far beyond immediate wartime needs. In a classified 1945 memorandum, later declassified and published in 1949 as "Communication Theory of Secrecy Systems," Shannon proved mathematically that perfect secrecy, in which the ciphertext reveals nothing about the message, is achievable, but only if the secret key is truly random, at least as long as the message, and never reused.

This work helped lay the foundation of modern cryptography. Its broader ideas, notably the principles of confusion and diffusion, shaped the design of practical ciphers from the Data Encryption Standard (DES) to today's Advanced Encryption Standard (AES). It marked a shift from "breaking codes through skill and intuition" to "designing systems whose security can be analyzed mathematically."
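To make perfect secrecy concrete, the textbook example is the one-time pad: each byte of the message is combined by XOR with a byte of truly random key that is as long as the message and used only once. The sketch below is illustrative only; the function names are hypothetical, and Python's `secrets.token_bytes` stands in for a true random source. It also shows why the ciphertext alone reveals nothing: for any candidate plaintext of the same length, some key maps the ciphertext to it.

```python
import secrets

def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length byte strings."""
    if len(a) != len(b):
        raise ValueError("inputs must have equal length")
    return bytes(x ^ y for x, y in zip(a, b))

def encrypt(plaintext: bytes, key: bytes) -> bytes:
    # The key must be uniformly random, as long as the message, and used only once.
    return xor_bytes(plaintext, key)

def decrypt(ciphertext: bytes, key: bytes) -> bytes:
    # XOR is its own inverse, so decryption is the same operation.
    return xor_bytes(ciphertext, key)

def key_explaining(ciphertext: bytes, candidate: bytes) -> bytes:
    """Return the key under which `ciphertext` decrypts to `candidate`.

    Such a key exists for every same-length candidate, so an eavesdropper
    who sees only the ciphertext cannot rule any of them out.
    """
    return xor_bytes(ciphertext, candidate)

if __name__ == "__main__":
    message = b"ATTACK AT DAWN"
    key = secrets.token_bytes(len(message))
    ciphertext = encrypt(message, key)
    assert decrypt(ciphertext, key) == message

    decoy = b"RETREAT AT SIX"  # same length (14 bytes) as the real message
    fake_key = key_explaining(ciphertext, decoy)
    print(decrypt(ciphertext, fake_key))  # b'RETREAT AT SIX'
```

Reusing a key breaks the guarantee: XOR-ing two ciphertexts made with the same key cancels the key and leaks the XOR of the two plaintexts.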

The Birth of Information Theory

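Shannon's 1948 paper did not merely describe communication; it defined it mathematically. He introduced a way to measure uncertainty with a formula now known as Shannon entropy: H = −Σ p(x) log p(x), where p(x) is the probability of each possible message or symbol x. With the logarithm taken to base 2, H is measured in bits. The equation may look intimidating, but the idea is simple: it measures how unpredictable a source of information is on average. A fair coin toss carries 1 bit of entropy, while a coin that always lands heads carries none.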
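The formula can be computed directly. The sketch below assumes the logarithm is taken to base 2, so results are in bits, and follows the usual convention that outcomes with probability 0 contribute nothing; the function names `entropy` and `empirical_entropy` are illustrative.

```python
import math
from collections import Counter
from typing import Iterable, Sequence

def entropy(probs: Sequence[float]) -> float:
    """Shannon entropy H = -sum p(x) log2 p(x), in bits.

    Each term is written as p * log2(1/p): the probability times the
    "surprise" of that outcome. Zero-probability outcomes are skipped.
    """
    if not math.isclose(sum(probs), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    return sum(p * math.log2(1 / p) for p in probs if p > 0)

def empirical_entropy(symbols: Iterable[str]) -> float:
    """Entropy of the symbol frequencies observed in a sequence."""
    counts = Counter(symbols)
    total = sum(counts.values())
    return entropy([n / total for n in counts.values()])

if __name__ == "__main__":
    print(entropy([0.5, 0.5]))   # fair coin: 1.0 bit
    print(entropy([1.0]))        # certain outcome: 0.0 bits
    print(entropy([0.25] * 4))   # four equally likely outcomes: 2.0 bits
    print(entropy([0.9, 0.1]))   # biased coin: about 0.469 bits
    print(empirical_entropy("abracadabra"))
```

The biased coin shows the key intuition: the more predictable the source, the fewer bits per symbol it actually carries.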

From this foundational work emerged several powerful concepts that shaped our technological world:

- The bit: The basic unit of information, the amount conveyed by a single choice between two equally likely alternatives (a 0 or a 1), a term Shannon credited to statistician John Tukey

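- Channel capacity: The recognition that every noisy communication channel has a maximum rate, its capacity, below which data can be sent with an arbitrarily small error rate using suitable coding, and above which reliable transmission is impossible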

- A unified theory of communication that applies equally to telephones, radios, and computers
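For one idealized case, a channel of bandwidth B hertz disturbed by additive white Gaussian noise, a result in the 1948 paper (now usually called the Shannon-Hartley theorem) gives the capacity as C = B log2(1 + S/N) bits per second, where S/N is the signal-to-noise power ratio. The sketch below plugs in illustrative, assumed numbers roughly in the range of an analog telephone line; they are not measurements, and the function names are hypothetical.

```python
import math

def db_to_ratio(db: float) -> float:
    """Convert a signal-to-noise ratio in decibels to a linear power ratio."""
    return 10 ** (db / 10)

def awgn_capacity(bandwidth_hz: float, snr_linear: float) -> float:
    """Capacity in bits/s of a band-limited channel with additive white Gaussian noise."""
    if bandwidth_hz <= 0 or snr_linear < 0:
        raise ValueError("bandwidth must be positive and SNR non-negative")
    return bandwidth_hz * math.log2(1 + snr_linear)

if __name__ == "__main__":
    # Illustrative assumption: about 3 kHz of bandwidth at 30 dB SNR.
    capacity = awgn_capacity(3_000, db_to_ratio(30))
    print(f"{capacity:,.0f} bits/s")  # about 29,902 bits/s
```

No coding scheme can reliably exceed this figure. Doubling the bandwidth doubles C, while doubling the signal power adds only about one bit per second per hertz once the SNR is high.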

Engineer Robert Lucky ranked Shannon's paper among the greatest works in the history of technological thought. Shannon's ideas also run through modern artificial intelligence: cross-entropy loss functions, information gain in decision trees, and perplexity measurements in language models are all built on his entropy formula.
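The link to machine learning is easiest to see in code. The sketch below uses made-up probabilities and labels purely for illustration. It computes the average cross-entropy of a model's predicted probabilities for the tokens that actually occurred, the perplexity derived from it (2 raised to the cross-entropy in bits), and the information gain of a decision-tree split (the drop in label entropy after splitting). The function names are hypothetical, and real libraries typically use natural logarithms rather than base 2.

```python
import math
from collections import Counter
from typing import Sequence

def entropy_of_labels(labels: Sequence[str]) -> float:
    """Entropy, in bits, of the label distribution in a list."""
    counts = Counter(labels)
    n = len(labels)
    return sum((c / n) * math.log2(n / c) for c in counts.values())

def cross_entropy(probs_of_true_tokens: Sequence[float]) -> float:
    """Average of -log2 q(token) over the tokens that actually occurred."""
    return sum(-math.log2(q) for q in probs_of_true_tokens) / len(probs_of_true_tokens)

def perplexity(probs_of_true_tokens: Sequence[float]) -> float:
    """2 ** cross-entropy: roughly, how many equally likely choices the model is torn between."""
    return 2 ** cross_entropy(probs_of_true_tokens)

def information_gain(parent: Sequence[str], children: Sequence[Sequence[str]]) -> float:
    """Reduction in label entropy achieved by splitting `parent` into `children`."""
    n = len(parent)
    weighted = sum(len(child) / n * entropy_of_labels(child) for child in children)
    return entropy_of_labels(parent) - weighted

if __name__ == "__main__":
    # Made-up probabilities a model assigned to the four tokens that actually came next.
    q = [0.5, 0.25, 0.5, 0.125]
    print(cross_entropy(q))  # (1 + 2 + 1 + 3) / 4 = 1.75 bits per token
    print(perplexity(q))     # 2 ** 1.75, about 3.36

    # A split that separates the labels perfectly gains the full parent entropy.
    labels = ["yes", "yes", "no", "no"]
    print(information_gain(labels, [["yes", "yes"], ["no", "no"]]))  # 1.0
```

All three quantities are averages of the same term, −log2 of a probability, which is why they inherit Shannon's units and intuitions.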

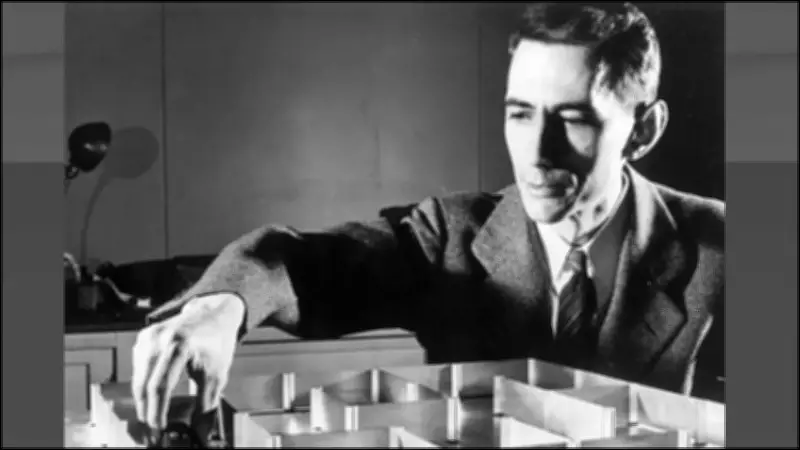

When Machines Started to Learn: Theseus the Mouse

Shannon was not only a theorist; he also enjoyed building devices that demonstrated his ideas. In 1950, at Bell Labs, he built Theseus, a mechanical mouse that learned to navigate a maze by trial and error. Once it had found a path to the goal, it remembered it and ran the maze directly on later attempts. If the maze was rearranged, it could explore again and learn the new route.

Theseus is widely considered one of the earliest demonstrations of machine learning in action. Shannon also wrote one of the first papers on programming a computer to play chess (1950) and helped organize the 1956 Dartmouth Workshop, which is often cited as the event that founded artificial intelligence as a distinct field of study.
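Theseus's learning strategy reportedly relied on a simple memory: for each square of the maze, its relay circuit recorded the direction in which the mouse last left that square. The Python below is a loose software analogy under that assumption, not a reconstruction of Shannon's circuit; the maze layout, the fixed up/right/down/left turning order, and the function names are all hypothetical. On the exploration run, the mouse tries, in each square, the next direction after the one it last used there; on the second run, it simply follows the recorded directions.

```python
from typing import Dict, List, Tuple

Cell = Tuple[int, int]
# Hypothetical turning order: up, right, down, left.
DIRECTIONS: List[Cell] = [(-1, 0), (0, 1), (1, 0), (0, -1)]

# Hypothetical maze: '#' is a wall, 'S' the start, 'G' the goal.
MAZE = [
    "#########",
    "#S..#...#",
    "#.#.#.#.#",
    "#.#...#G#",
    "#########",
]

def find(maze: List[str], symbol: str) -> Cell:
    for r, row in enumerate(maze):
        c = row.find(symbol)
        if c != -1:
            return (r, c)
    raise ValueError(f"{symbol!r} not found in maze")

def is_open(maze: List[str], cell: Cell) -> bool:
    r, c = cell
    return 0 <= r < len(maze) and 0 <= c < len(maze[r]) and maze[r][c] != "#"

def explore(maze: List[str], memory: Dict[Cell, int], max_steps: int = 10_000) -> int:
    """Trial-and-error run from S to G. Records each square's last exit
    direction in `memory` and returns the number of moves taken."""
    pos, goal = find(maze, "S"), find(maze, "G")
    steps = 0
    while pos != goal:
        if steps >= max_steps:
            raise RuntimeError("goal not reached; is it walled off?")
        first_try = (memory.get(pos, -1) + 1) % 4  # start after the last exit used here
        for k in range(4):
            d = (first_try + k) % 4
            nxt = (pos[0] + DIRECTIONS[d][0], pos[1] + DIRECTIONS[d][1])
            if is_open(maze, nxt):
                memory[pos] = d
                pos = nxt
                break
        else:
            raise RuntimeError(f"square {pos} has no open neighbor")
        steps += 1
    return steps

def replay(maze: List[str], memory: Dict[Cell, int]) -> int:
    """Second run: follow the remembered exit from each square. Returns moves taken."""
    pos, goal = find(maze, "S"), find(maze, "G")
    steps = 0
    while pos != goal:
        d = memory[pos]
        pos = (pos[0] + DIRECTIONS[d][0], pos[1] + DIRECTIONS[d][1])
        steps += 1
        if steps > len(memory):
            raise RuntimeError("remembered route loops")
    return steps

if __name__ == "__main__":
    memory: Dict[Cell, int] = {}
    print("first run:", explore(MAZE, memory), "moves")
    print("second run:", replay(MAZE, memory), "moves")  # 12: the only route in this maze
```

In this version, the remembered exits always form a loop-free route, because each square's last exit leads either to the goal or to a square that was left later still. If a wall is moved, running `explore` again with the same memory updates the route, loosely mirroring how Theseus adapted.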

The Playful Genius Behind the Theories

Shannon was not just a serious academic; he had a famously playful side that balanced his intellectual rigor. At Bell Labs, he was known for riding a unicycle through the hallways while juggling. He built gadgets including a flame-throwing trumpet and a rocket-powered Frisbee, and he called his home "Entropy House," a playful nod to his favorite scientific concept.

Despite his monumental contributions, Shannon often said that curiosity, not fame or money, was what drove him: he wanted to understand how things worked at their most fundamental level.

The Legacy Inside Every Screen You Touch

Shannon's influence did not stay confined to academic textbooks; it became the backbone of our digital world. From internet data transmission and mobile networks to encryption standards and artificial intelligence systems, his ideas quietly power nearly every technological tool students use today.

Roboticist Rodney Brooks has called Shannon the 20th-century engineer who contributed most to 21st-century technologies. Shannon joined the MIT faculty in 1956 and remained there until his retirement in 1978. He died in 2001 after years of living with Alzheimer's disease, a particularly poignant irony for the man who defined how information itself is measured and understood.

Why Students Should Care About Shannon's Legacy

Claude Shannon's story is more than a chapter in the history of mathematics or engineering. It shows how a single idea, deeply understood and carefully developed, can reshape the world. He did not just invent theories; he gave humanity a precise language for describing information itself. Every time you send a text message, stream a video, or train an artificial intelligence model, you are relying on concepts Claude Shannon introduced.