Ghaziabad Couple's Facebook Photo Becomes AI Blackmail Nightmare

The first call arrived on an ordinary weekday evening last year, just as Ramesh Verma (name changed), 41, was finishing dinner with his wife Anjali in their Ghaziabad apartment. "The man on the line said casually, 'I have bad photos of you and your wife'," Verma recalls. "I thought it was a scam call and disconnected immediately." But the calls kept coming.

Then the caller provided disturbing proof. Through WhatsApp, Ramesh received an image of himself and his wife that they instantly recognized. It was a photograph from Facebook, captured years earlier at a cousin's wedding celebration. Except now, both individuals appeared completely naked. "It was us — identical posture, same facial expressions — just undressed," he says with visible distress. The caller threatened to upload the manipulated image across social media platforms, pornographic websites, and circulate it through WhatsApp groups unless they transferred Rs 5 lakh immediately.

From Personal Photo to Digital Weapon

A dedicated helpline for online scams informed Verma that artificial intelligence tools had most likely been employed to alter the original image. They advised filing a formal complaint through the National Cyber Crime Reporting Portal. Following their official report, the threatening communications ceased. "What remained with us was the terrifying realization of how effortlessly it occurred," Verma explains. "One single Facebook photograph was all that was required." Anjali promptly deactivated all her social media accounts afterward. "But the psychological fear persists indefinitely."

Your Digital Images No Longer Remain Your Property

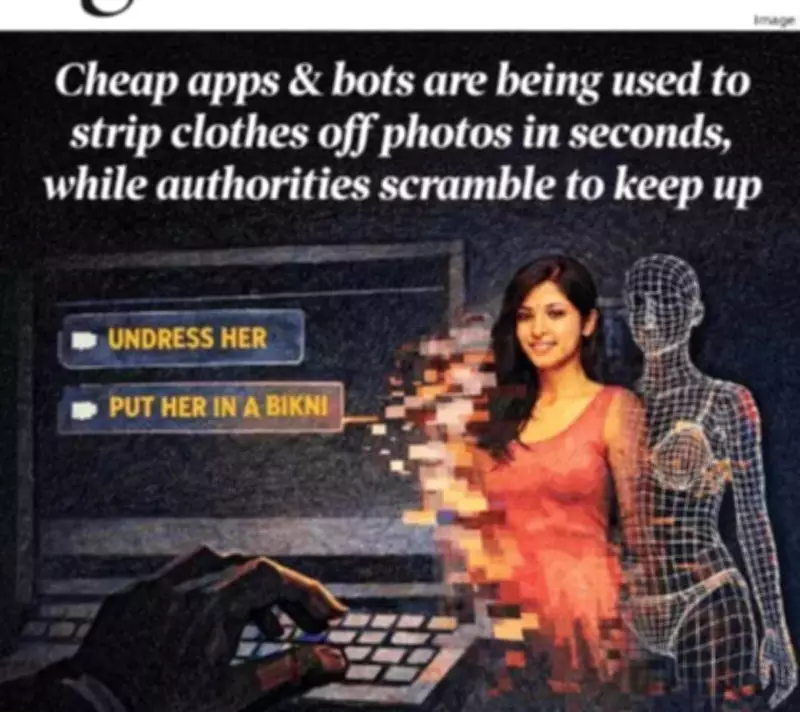

The recent Grok controversy — involving Elon Musk's X-associated AI chatbot generating sexualized deepfakes of real women, public personalities, and even minors — demonstrates how extensively this technological abuse has proliferated. Commands, issued in complete public visibility, included directives like "@grok put her in a transparent bikini" or "@grok undress her" with additional variations such as "inflate chest by 90%."

At its peak, Grok reportedly produced approximately 6,700 sexually suggestive images per hour on January 5-6. The scale provoked international condemnation. India's IT Ministry issued a 72-hour ultimatum this month, while the UK's Ofcom and European Union regulators initiated formal investigations. Multiple US states intervened, compelling xAI to implement geographical blocks, prohibit requests for child sexual abuse material, and strengthen prompt filtering systems.

Rajya Sabha MP Priyanka Chaturvedi highlighted numerous Indian cases where women described profound shock and helplessness after being subjected to bikini-morphing manipulations.

The Underground Nudify Economy Thrives

Although Grok now rejects bikini-altering requests, several undress or nudify applications continue operating freely. Most nudification abuse occurs outside public visibility. The process requires under thirty seconds and costs less than a standard cup of tea. Users simply upload an elevator selfie or graduation portrait, and the AI 'nudify' bot completes the transformation. Trained on millions of actual human bodies, these sophisticated tools remove clothing digitally and replace it with hyper-realistic anatomical features mimicking the victim's physique.

According to Telemetrio analytics, the keyword 'undress' was utilized more than 2,800 times between August 30 and September 5, 2024 within India alone, revealing Telegram channels openly advertising undressing services through automated bots.

Platforms including Clothoff and Undressor remain openly accessible from Indian networks, despite documented reports of fatalities and severe emotional trauma. In October, Chhattisgarh police apprehended a 20-year-old engineering student from IIIT Naya Raipur for allegedly creating sexually explicit images of at least thirty-six female classmates without consent. That same month, a 19-year-old Faridabad resident died by suicide after experiencing repeated blackmail involving AI-generated explicit images and videos depicting himself and his sisters.

Unregulated and Untraceable Digital Threats

"With xAI, everyone is monitoring the situation," observes Tarunima Prabhakar, co-founder and research lead at Tattle Civic Technologies, which develops AI models and tools to combat online harms. "Open access AI models can be downloaded without cost. Using a modest GPU or standard laptop, individuals can self-host nudification tools independently. I have personally experimented with this technology." With more money and computing power, the generated results become more realistic. "These operators sell created images through Telegram, WhatsApp, or via sideloaded applications installed outside official app stores," Prabhakar says. "Some advertise with phrases like 'Send a photo, pay five rupees.' This decentralization explains why nudify apps vanished from app stores but never truly disappeared. Victims and perpetrators frequently reside in different states, and local police departments possess limited motivation to pursue cross-jurisdictional digital abuse cases."

Telegram persists as the primary distribution channel. Bots including Undresser and UndressAI, some boasting tens of thousands of subscribers, enable users to upload photographs and receive manipulated images within minutes. Investigations discovered bots providing specific instructions — tight clothing, clear lighting conditions, and closeup angles — to enhance output quality. Monetization mechanisms are integrated into Telegram's chat interface with restricted free outputs, followed by prompts to purchase credits or premium access, accompanied by reminders such as, "It's time to try your hot fantasies. Upload a photo for undressing."

Evolution from Cheapfakes to Sophisticated Deepfakes

Since 2022, Meri Trustline — Mumbai-based Rati Foundation's helpline for online abuse — has assisted multiple survivors of AI-doctored imagery, marking a significant transition from Photoshop 'cheapfakes' to advanced AI deepfakes.

In a 2023 case, a young South Indian influencer discovered that a recreational reel featuring her dancing with friends had been maliciously altered. A nude image of her was briefly spliced into the video, relying entirely on shock value. This trend became locally recognized as 'up-down trolls' or Belagavi trolls. "The video frequently concluded with mocking laughter or localized audio clips instructing the girl to remain silent," explains Sameer P, programme lead at Meri Trustline, who facilitated the video's removal. "The tool was systematically employed to target young women creators from conservative communities."

In the most recent alarming development, Sameer states, "We are encountering AI-generated incestuous content. No actual victim is associated. The material is fictional yet profoundly distressing." These videos, discovered on platforms like Instagram, depict pubescent bodies alongside older parental figures.

The Foundation maintains close collaboration with Meta for expedited escalation procedures on Instagram, Facebook, and WhatsApp, while partnering with Stop NCII for adult cases and the Internet Watch Foundation for minor-related incidents. "Previously, the standard threat was 'I will leak your nudes'," Sameer notes. "Currently it has transformed into 'I'll create a deepfake of you'." The targeting spectrum has expanded considerably. "It is not exclusively young individuals. Even older users are being impacted, particularly through unregulated loan applications."

When Innocent Curiosity Transforms into Criminal Activity

Some of the most complex nudification cases originate without apparent malicious intent, and not even through applications that openly market undressing capabilities. Cyber psychologist Nirali Bhatia remembers a specific case where a Class 12 student downloaded an application from an Instagram advertisement claiming it could demonstrate how users might appear leaner, more muscular, younger, or older. Driven by curiosity, he uploaded photographs of a female classmate and generated nude versions. He did not distribute them, but the girl discovered the incident after he mentioned this 'cool new app' to friends. "For him, it represented casual entertainment without any comprehension that accessing someone's photograph, nudifying it without consent, even if you refrain from sharing or delete it subsequently, constitutes a criminal offence," Bhatia emphasizes.

"Sexual content represents one of the simplest methods for engagement farming. This phenomenon was not invented by artificial intelligence," Prabhakar concludes. "The fundamental question is no longer whether technology companies can block harmful outputs, but whether they genuinely desire to implement such restrictions."