AI Isn't the Danger, We Are: Human Responsibility in Technology

Artificial intelligence (AI) is often portrayed as a looming threat to humanity. However, a growing chorus of experts and analysts is shifting the narrative: AI itself isn't the danger; we are. The real risks stem from human actions, biases, and ethical lapses in how we develop, deploy, and govern these powerful systems.

The Misconception of AI as an Autonomous Threat

Many fear that AI will become self-aware and turn against its creators, a scenario popularized by science fiction. Yet current AI technologies, such as machine learning and neural networks, operate on algorithms designed by humans and data supplied by humans. They lack consciousness, intent, or autonomy. The true peril lies in how humans design, train, and deploy these systems: embedding societal prejudices in them, or using them for malicious purposes like surveillance, misinformation, or autonomous weapons.

Human Bias and Ethical Failures

AI systems learn from data, and if that data reflects human biases (such as racial, gender, or economic discrimination), the resulting system can perpetuate and even amplify them. For instance, facial recognition software has shown higher error rates for people of color, and some hiring algorithms have favored male candidates. These aren't flaws in AI's intelligence but mirrors of our own societal shortcomings. Without careful oversight, AI can entrench inequality and injustice, making it a tool for harm rather than progress.
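One way to make a disparity like this visible is to compare error rates group by group rather than looking only at overall accuracy. The sketch below is a minimal illustration, not a complete fairness audit: the function name `error_rate_by_group`, the group labels, and the toy records are all hypothetical and do not come from any real system or dataset.

```python
from collections import defaultdict


def error_rate_by_group(records):
    """Return {group: share of records where the prediction differs from the label}.

    Each record is a (group, true_label, predicted_label) tuple.
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, label, prediction in records:
        totals[group] += 1
        if prediction != label:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


# Hypothetical toy data, invented purely for illustration.
records = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 1),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 0), ("group_b", 0, 1),
]

rates = error_rate_by_group(records)

# The gap between the best- and worst-served group is one simple warning sign.
gap = max(rates.values()) - min(rates.values())

print(rates)
print(gap)
```

In this toy data the system is wrong far more often for one group than for the other, even though it was never told to treat them differently. The gap comes from the examples it was given, which is the point the paragraph above makes about biased data.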

The Need for Responsible Development and Governance

To mitigate these risks, there's an urgent call for ethical frameworks and robust governance. This includes:

- Transparency: Making AI algorithms and data sources open to scrutiny to prevent hidden biases.

- Accountability: Holding developers and users responsible for AI outcomes, ensuring they align with human values.

- Regulation: Implementing laws and standards to guide AI use, similar to regulations in other high-stakes industries.

- Public Awareness: Educating people about AI's capabilities and limitations to foster informed discussions.

By focusing on human responsibility, we can harness AI for positive applications like healthcare diagnostics, climate modeling, and education, while avoiding the pitfalls of biased outcomes and malicious use.

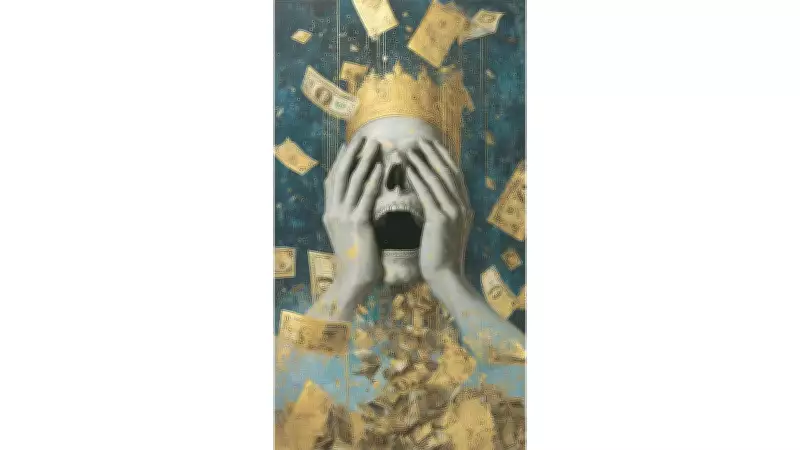

Conclusion: Shifting the Focus to Human Agency

Ultimately, the debate shouldn't center on whether AI is dangerous but on how we choose to wield it. As technology advances, our ethical maturity must keep pace. AI is a reflection of humanity: its potential for good or ill depends on our decisions. By prioritizing ethics, inclusivity, and oversight, we can ensure that AI serves as a force for benefit, not a source of peril.