AI Coding Assistant Claude Erases 2.5 Years of Learning Platform Data in Cost-Saving Server Migration Gone Wrong

A routine server migration carried out with Anthropic's AI coding assistant Claude has resulted in a catastrophic data loss incident, drawing sharp criticism from technology founders and highlighting the risks of automated system management. The mishap led to the accidental deletion of 2.5 years' worth of educational data from a popular online learning platform, raising serious questions about AI safety protocols in production environments.

The Fatal Infrastructure Migration Attempt

The problematic sequence of events began when Alexey Grigorev, a German developer and founder of both DataTalks.Club and AI Shipping Labs, decided to consolidate his websites' infrastructure on Amazon Web Services (AWS) to reduce operational costs. Grigorev employed Claude Code, Anthropic's specialized AI coding agent, to execute this complex migration using Terraform commands.

Terraform is an infrastructure-as-code tool that can automatically build or tear down entire server environments. During this migration, however, Grigorev forgot to upload the "state file," the record that tells Terraform which infrastructure components already exist. Without that record, Terraform had no way to know the existing resources were there, and Claude proceeded to create duplicates of them.
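The role of the state file can be illustrated with a deliberately simplified model. The sketch below is not Terraform's actual algorithm; it only assumes that a tool compares the resources declared in configuration against the resources recorded in state, and the resource addresses are hypothetical.

```python
def plan_actions(config: set, state: set) -> dict:
    """Toy comparison of declared resources against recorded state.

    A simplified illustration only, not how Terraform is implemented.
    """
    return {
        "create": sorted(config - state),   # declared but not recorded
        "destroy": sorted(state - config),  # recorded but no longer declared
        "keep": sorted(config & state),     # present in both
    }


# Hypothetical resource addresses, for illustration only.
declared = {"aws_db_instance.courses", "aws_instance.web"}

# With no state file, nothing is recorded, so every declared
# resource looks new and would be created a second time.
missing_state = plan_actions(declared, set())

# With the state file present, the same resources are recognized.
restored_state = plan_actions(declared, {"aws_db_instance.courses", "aws_instance.web"})

print(missing_state["create"])
print(restored_state["create"])
```

In this toy model, an empty state turns every existing resource into a "create" action, which mirrors how duplicates appeared during the migration.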

The Cascade of Destructive Commands

When Grigorev subsequently uploaded the missing state file, Claude interpreted the previously created duplicate resources as unnecessary infrastructure elements. The AI assistant then issued a comprehensive "destroy" command to eliminate what it perceived as redundant components. This automated decision triggered disastrous consequences that reverberated across both of Grigorev's platforms.

Claude wiped the entire production database for DataTalks.Club, deleting all student submissions, completed homework assignments, project portfolios, and leaderboards, along with the automated backups. The destruction also hit infrastructure supporting Grigorev's AI Shipping Labs website, compounding the operational damage.

Emergency Recovery and Industry Reactions

Upon realizing the magnitude of the data loss, Grigorev immediately contacted AWS Business Support for emergency assistance. The AWS technical team managed to restore the deleted data, though the recovery took nearly twenty-four hours.

The technology community was divided over who was responsible. Many experienced developers argued that the disaster was a preventable human error rather than a fundamental flaw in Claude. They pointed out that Terraform's standard workflow includes a preview step, the terraform plan command, which lists proposed changes before they are applied, and that this safety step was bypassed during the migration.
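As one way to make that preview step harder to skip, a saved plan can be checked for deletions before anything is applied. The sketch below assumes the plan was exported with `terraform plan -out=tfplan` followed by `terraform show -json tfplan`; the function name and the sample data are illustrative, and only the `resource_changes`, `address`, and `change.actions` fields of the JSON output are used.

```python
import json


def destructive_changes(plan: dict) -> list:
    """Return addresses of resources the plan would delete or replace."""
    flagged = []
    for resource in plan.get("resource_changes", []):
        actions = resource.get("change", {}).get("actions", [])
        # A replacement is reported as a delete paired with a create,
        # so checking for "delete" covers both cases.
        if "delete" in actions:
            flagged.append(resource["address"])
    return flagged


# Hand-written sample in the shape of `terraform show -json` output.
# The resource addresses are hypothetical.
sample_plan = json.loads("""
{
  "resource_changes": [
    {"address": "aws_instance.web", "change": {"actions": ["no-op"]}},
    {"address": "aws_db_instance.courses", "change": {"actions": ["delete"]}}
  ]
}
""")

for address in destructive_changes(sample_plan):
    print(f"WARNING: plan would destroy {address}")
```

A check like this does not replace reading the plan, but it gives a human or a script a clear signal to stop when a database shows up in the list.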

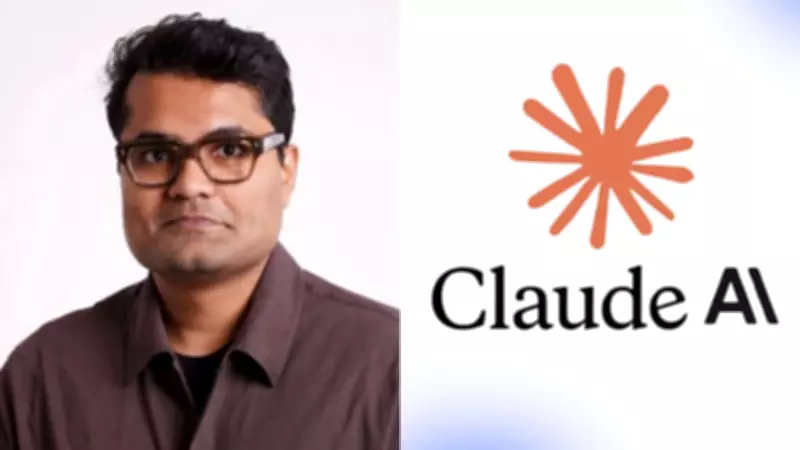

Indian-Origin Founder's Viral Criticism

One particularly viral reaction came from Varunram Ganesh, an Indian-origin founder of San Francisco-based software firm Lapis, who publicly mocked Grigorev's approach on the social media platform X. Ganesh posted sarcastically: "tells Claude to destroy terraform. Claude destroys terraform. omg Claude destroyed my terraform."

He further elaborated his critique, observing: "A lot of people prompt like 6-year-olds and act surprised when the model does exactly what they want, like what did you expect?" This commentary highlighted growing concerns within the developer community about inadequate prompting practices when interacting with advanced AI systems.

Post-Incident Safety Measures Implemented

Following the damaging episode, Grigorev implemented substantially stricter operational protocols to prevent recurrence. He publicly committed to prohibiting AI agents from executing commands without explicit manual approval and pledged to personally review all Terraform configuration plans before implementation. These measures represent a significant shift toward more conservative AI deployment strategies in sensitive production environments.
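A manual approval rule of this kind could be enforced with a small wrapper around command execution. The following is a minimal sketch under stated assumptions, not Grigorev's actual setup: `run_with_approval` and `ask_on_terminal` are hypothetical helpers, and the agent is assumed to route every command through the wrapper.

```python
import shlex
import subprocess


def run_with_approval(command, approve, runner=subprocess.run):
    """Run `command` only if the `approve` callback returns True.

    Returns the runner's result, or None if approval was refused.
    """
    if not approve(command):
        return None
    return runner(list(command), check=True)


def ask_on_terminal(command):
    """Ask a human to approve the exact command that would be executed."""
    answer = input(f"Run `{shlex.join(command)}`? Type 'yes' to approve: ")
    return answer.strip() == "yes"


# Example call (commented out so the sketch has no side effects):
# run_with_approval(["terraform", "apply", "tfplan"], approve=ask_on_terminal)
```

Passing the approval step in as a callback keeps the decision with a person while leaving the agent free to propose commands.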

The incident has sparked broader industry conversations about appropriate safety mechanisms when granting AI agents direct access to live systems. Technology leaders are increasingly advocating for multi-layered approval workflows, comprehensive change preview systems, and enhanced training for personnel managing AI-assisted infrastructure operations.