India's Strategic Shift Towards Sovereign AI for Enhanced National Security

When India analyzes satellite imagery, secures its borders and coastlines, formulates military strategy, maps national assets, monitors power grids, detects cyberattacks, and fortifies UPI payment gateways, it relies heavily on artificial intelligence (AI) to run these massive technological operations. AI is no longer an optional tool but a critical component, running on server infrastructure, that keeps the wheels of daily life in motion.

As technology and geopolitics grow ever more tightly intertwined, the AI models that interpret sensitive information, identify anomalies, manage and analyze data, and assist in decision-making increasingly control the synapses of India's strategic nervous system.

The Global AI Landscape and India's Position

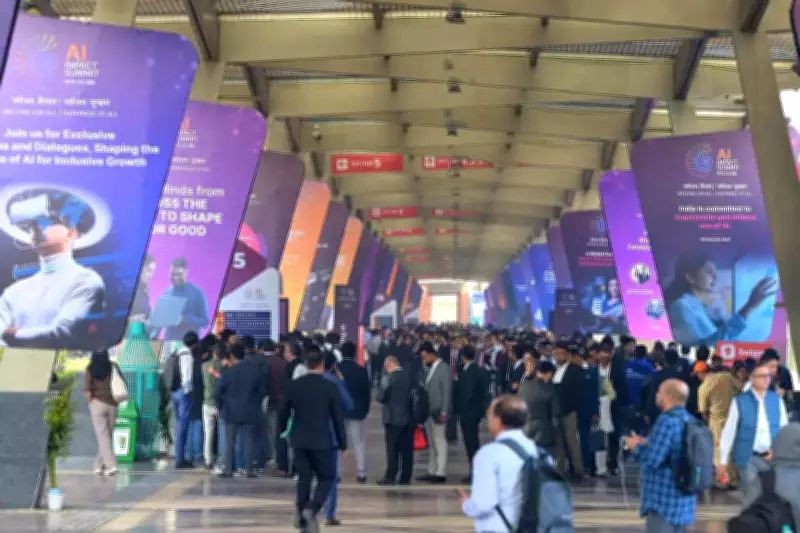

As the world's second-largest AI market, India is celebrated by the global tech community, as evidenced by the turnout at the ongoing AI Impact Summit in Delhi. While this presents a significant economic opportunity for an aspiring superpower, the government faces a foundational question in adopting AI into administrative and security frameworks: how much can India rely on foreign AI models?

At the core of this inquiry lies data security. Whether for governance or national security, governments use AI for deep analytics, which requires feeding vast amounts of data (public, sensitive, and militarily strategic) into AI models. Much of the world's foundational AI infrastructure, including advanced chips, large language models, and hyperscale cloud systems, is concentrated in the United States and China, giving these nations disproportionate influence in the AI universe.

The Urgent Need for Sovereign AI

In India, there is a growing realization of the necessity for sovereign AI to safeguard national security. Without it, India's use of AI for security, governance, and other strategic purposes will remain cautious and suboptimal. Additionally, India requires AI that is built, hosted, and computed domestically to preserve the "strategic autonomy" of its foreign policy.

In a recent report titled 'India's AI Gambit,' the Mumbai-based think tank Strategic Foresight Group (SFG) calls for an urgent government decision on whether India can regulate or control the import of AI models that may pose a risk to national security. The report also suggests exploring a mandatory national security assessment framework for models deployed from abroad.

Threats and Military Perspectives

Sundeep Waslekar of SFG highlights that AI introduces more asymmetric threats to national security. He advocates for the National Security Council to scrutinize every foreign model deployed in India.

Military veteran Lieutenant General Satish Dua (retired), a counter-terrorism specialist instrumental in the 2016 Uri surgical strike, emphasizes AI's utility in threat assessment and strategic decision-making. AI tools can analyze data from multiple sensors for weather-based artillery planning and for predicting adversary behavior. However, he cautions against trusting foreign models with sensitive data.

In a January paper titled 'Beyond the Kinetic: Deconstructing warfare in the socio-technical cognitive battlespace (STCB),' retired Lieutenant Colonel Pavithran Rajan and former head of Northern Command Lieutenant General D S Hooda (retired) argue that modern battlefields are increasingly shaped by technology and information manipulation. This was evident during Operation Sindoor last year and in subsequent military confrontations with Pakistan, which led India to establish its first integrated battle group, Rudra, tailored for modern warfare.

The paper states that AI is revolutionizing STCB, including the weaponization of AI-generated content like deepfakes for disinformation campaigns, the use of machine learning for micro-targeting populations, and the deployment of AI in autonomous weapons systems.

Guarded Adoption and Vulnerabilities

Advocating for sovereign AI models, Lieutenant Colonel Rajan notes that AI will increasingly determine how states govern, secure themselves, and project power. Sovereignty now resides in control over data, algorithms, and cognitive infrastructures, not just territory.

Pankaj Saran, a former diplomat and convener of the Centre for Research on Strategic and Security Issues (NatStrat), points out that AI's own vulnerabilities pose risks, and that attackers can use AI to automate and scale attacks, making defense more challenging. Sovereign control over AI models, he says, allows a country to manage these threats effectively. He cites initiatives like NATGRID's AI-enabled Organised Crime Network Database, which requires strict governance of security, access control, and auditing.

Consequently, AI adoption across ministries remains cautious. A source in the Ministry of Power notes that AI tools can help forecast generation capacity, particularly in renewable energy, but that vulnerabilities must be studied first. Old systems may need replacement, and if weak points are not addressed, a single cyberattack could disable the entire grid. Major ministries such as defense, finance, education, and health are similarly wary of deploying AI in core systems without robust safeguards.

Building Indian AI Infrastructure

Ministry of Electronics and Information Technology (MeitY) officials highlight that a key deliverable under the IndiaAI Mission is to build and support Indian foundational models. Additional Secretary Abhishek Singh acknowledges that dependence on foreign models carries strategic risks, such as data being sent to other geographies and potential denial of access. At the AI Impact Summit, sovereign AI models will be showcased.

The government has shortlisted 12 startups to build sovereign AI models, including Sarvam AI, BharatGen, Gyan AI, Shodh AI, Intellihealth, Genloop, Socket, Fractal, and Tech Mahindra. Frontrunners include Bengaluru-based Sarvam AI, IIT-Bombay-incubated BharatGen, and Mumbai-based Fractal. Sarvam AI has released models like Sarvam Vision and Bulbul V3, which the company claims outperform global competitors on certain benchmarks and are trained on 22 Indian language datasets. Fractal has developed a healthcare model, Vaidya, while BharatGen is building Bharat Sagar for public policy use cases.

Singh describes five layers of the AI ecosystem: chips, data centers, servers, energy, and models and applications. To achieve self-reliance, India must manage all these layers independently. India is aggressively positioning itself as a data center hub to gain logistical influence over global AI architecture.

However, founders of some AI startups building sovereign models, speaking anonymously, report that infrastructure support, including access to GPUs and funding, has been slow to arrive. A Mumbai-based founder says the company has received only a fraction of the funds sanctioned under the AI mission and has had to rely on its own resources.

Open-Source Solutions and Limitations

MeitY released the India AI Governance Guidelines in November 2025, outlining recommendations on risk identification, inter-agency collaboration, and national security safeguards. A MeitY source explains that in government systems, open-source models are downloaded and hosted on domestic servers rather than accessed via foreign-hosted APIs. At the National Informatics Centre (NIC), for example, models are hosted locally to ensure that data resides in India.

Open-source use has limitations. While local hosting protects data, control over the model itself remains with the provider of the large language model, leaving the user dependent on that provider for upgrades and customizations. In areas of national importance, this results in critical time lags between identifying problems and implementing solutions.

Prakash Kumar, former IAS officer and CEO of the Wadhwani Centre for Digital Government Transformation, notes that large Indian companies using AI models have agreements with firms like OpenAI and Anthropic to prevent data leakage. He states that, to his knowledge, the government does not yet have such pacts with AI companies.

In the interim, Pankaj Saran suggests India can learn from the European Union's data protection regime. He says India's Digital Personal Data Protection Act is a step forward but needs clear guidelines on consent, data minimization, and retention limits. He also stresses the importance of cyber awareness and resilience across institutions and citizens.