Meta Joins Tech Giants with Custom AI Chip Development

Facebook parent company Meta has officially entered the competitive arena of custom artificial intelligence hardware, revealing a suite of four in-house AI chips designed to power its massive data center expansion. The move places Meta alongside rivals such as Google, Microsoft, and Amazon, all of which have developed specialized chips to reduce their dependence on expensive, supply-constrained hardware from vendors like Nvidia and AMD.

Rapid Iteration and Inference-First Strategy

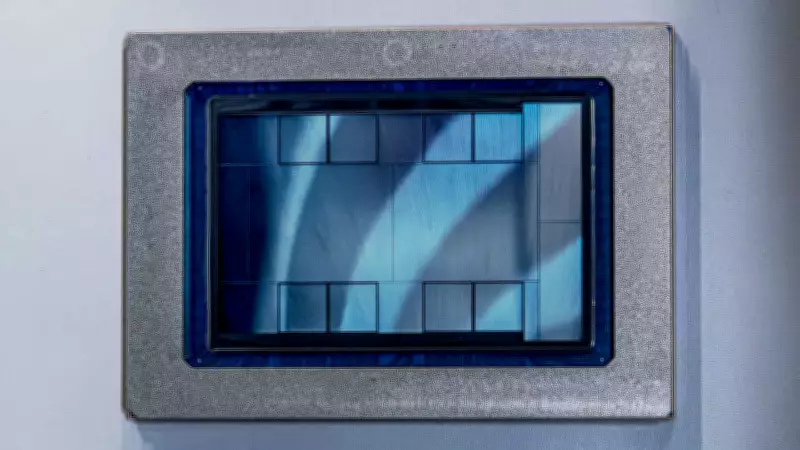

Meta is accelerating its chip development with plans to release new versions of its Meta Training and Inference Accelerator (MTIA) chips every six months. The company has articulated a competitive strategy that emphasizes rapid, iterative development, an inference-first focus, and seamless adoption through adherence to industry standards.

Meta says this accelerated pace lets it adapt quickly to evolving AI techniques and technologies. While the industry typically launches new AI chips every one to two years, Meta says its modular, reusable designs make faster releases possible, keeping costs down while keeping pace with hardware advances.

Mainstream chips are often built for the most demanding workloads, such as large-scale generative AI pre-training, and then applied less cost-effectively to other tasks like inference. Meta takes the opposite approach: it optimizes the MTIA 450 and 500 first for generative AI inference, then extends them to other workloads, including ranking and recommendation training and inference, as well as generative AI training.

Deployment and Capabilities of MTIA Chips

One of Meta's in-house chips, the MTIA 300, is already deployed and handles ranking and recommendation tasks, which determine the ads and posts users see on Facebook and Instagram. Meanwhile, the MTIA 400, 450, and 500 are designed specifically for generative AI applications, such as creating high-quality images and videos from text prompts.

Testing of the MTIA 400 is complete, and the remaining chips are expected to be operational by 2027. The timeline reflects Meta's push to bring custom hardware into its infrastructure quickly.

Strategic Leverage and Supply Diversification

Despite recent multi-year deals to purchase millions of GPUs from Nvidia and AMD, Meta seeks greater leverage in its hardware strategy. Yee Jiun Song, Meta's Vice President of Engineering, told CNBC that custom chips enable the company to achieve better price-performance ratios across its fleet.

"This provides us with more diversity in terms of silicon supply and insulates us from price changes to some extent," Song said, underscoring the value of reducing reliance on external vendors amid fluctuating market conditions.

Meta's push into custom AI chips reflects a broader industry trend where tech giants are investing heavily in proprietary hardware to gain competitive advantages, control costs, and ensure supply chain resilience in the rapidly evolving AI landscape.