Nvidia's AI Roadmap Takes Center Stage at GTC Conference

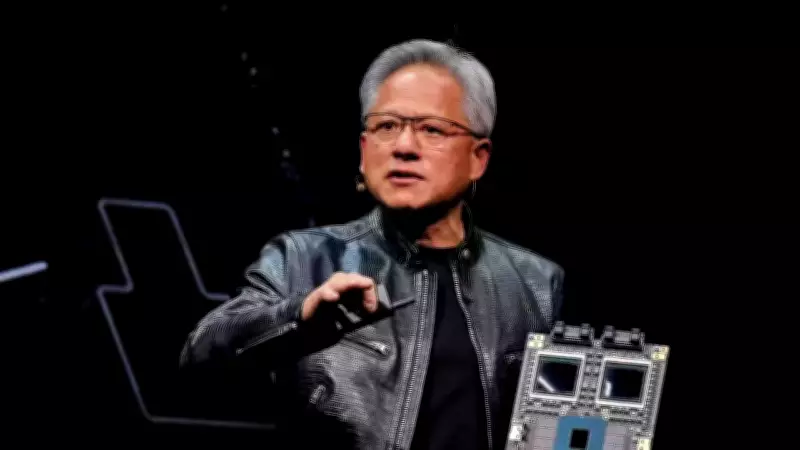

Nvidia CEO Jensen Huang is set to detail the company's next strategic moves in artificial intelligence (AI) hardware, software, and international partnerships at the upcoming Nvidia GTC conference. The annual developer gathering has become a key venue where Nvidia outlines its AI roadmap, launches new chips, and addresses industry trends.

Anticipated Announcements and Market Context

The conference comes shortly after Nvidia reported strong earnings that did little to move its stock, prompting investors to question whether current levels of AI spending are sustainable. Analysts and market watchers are expected to focus on several key themes in Huang's keynote and the related announcements.

Potential Launch of Inference-Focused AI Chip

One of the most closely watched possibilities is an inference-focused AI chip. Inference refers to running trained AI models rather than training them, a segment expected to grow as AI applications proliferate.

Huang previously hinted that Nvidia is preparing "several new chips the world has never seen before." A Wall Street Journal report earlier this year suggested Nvidia might introduce an inference chip that integrates technology from AI startup Groq, with OpenAI likely as a customer. The chip's architecture could also show how Nvidia plans to handle the memory demands of inference workloads, which often depend on high-bandwidth memory (HBM), a component that is in short supply. Observers are keen to see whether Nvidia will rely more heavily on SRAM, a fast on-chip memory common in inference systems.

Sid Sheth, founder and CEO of d-Matrix, told Business Insider (BI) that the competitive landscape for inference chips differs from the one for training chips. Sheth noted that while Nvidia remains dominant in training workloads, "inference is a different ballgame." He added that CUDA, Nvidia's programming platform widely used for AI model training, offers less of an advantage in inference: developers can more easily choose alternatives because running a finished AI model does not involve the same programming complexity.

Transition Beyond Rubin and Future Architectures

Another focal point at GTC is Nvidia's shift to its next-generation AI infrastructure. The company has already unveiled its forthcoming Rubin Ultra systems, which are expected to draw substantially more power than earlier platforms.

Sebastien Naji, a research analyst at William Blair, told BI that investors will watch how Nvidia manages this transition and whether cloud providers adopt the platform. Analysts are also curious about what comes after Rubin, including a prospective architecture dubbed Feynman. One significant advance expected in that generation is co-packaged optics, which uses light instead of electricity to move data between chips, potentially lowering power consumption and enabling larger computing clusters.

Earlier this month, Nvidia disclosed multibillion-dollar supply deals with optical component makers Coherent Corp. and Lumentum Holdings, a sign that optical technologies may feature in its future AI infrastructure.

Agentic AI and Robotics Developments

Investors are also assessing whether new AI applications can sustain demand for computing infrastructure. Brian Mulberry, chief market strategist at Zacks Investment Management, told BI that the focus has shifted from how fast AI demand is growing to how long it will last.

Huang has often described agentic AI, in which software agents use AI models to carry out tasks autonomously, as a future driver of inference demand. Sheth said development of agentic systems could broaden as technologies such as voice interfaces, video processing, and multimodal AI mature. Of the coming surge in inference, he said, "We haven't even started."

Robotics may also figure in Nvidia's long-term plans, according to Daniel Newman, CEO of The Futurum Group. Newman noted that Nvidia recorded approximately $6 billion in robotics-related revenue last quarter and has signaled that it intends to accelerate its humanoid robotics initiatives.

Geopolitical Influences on Nvidia's Strategy

Policy and geopolitical factors are also expected to shape Nvidia's announcements. Export controls and international tensions increasingly affect the market for advanced AI chips.

According to a Financial Times report, Nvidia has stopped producing its H200 chips for China and is reallocating that capacity to its next-generation Rubin platform. Meanwhile, the US government is considering additional restrictions on AI chip exports, which could affect Nvidia's international sales.

Newman noted that markets beyond China remain crucial to Nvidia's growth, citing substantial AI infrastructure investments in countries such as Saudi Arabia and the United Arab Emirates. However, conflicts in the Middle East have raised concerns about supply chains, energy costs, and data center construction timelines.

As AI becomes more intertwined with national policy and economic rivalry, analysts say government decisions may influence Nvidia's global strategy alongside demand from tech firms and cloud providers.