Anthropic Announces Legal Challenge Against Pentagon's 'Supply Chain Risk' Designation

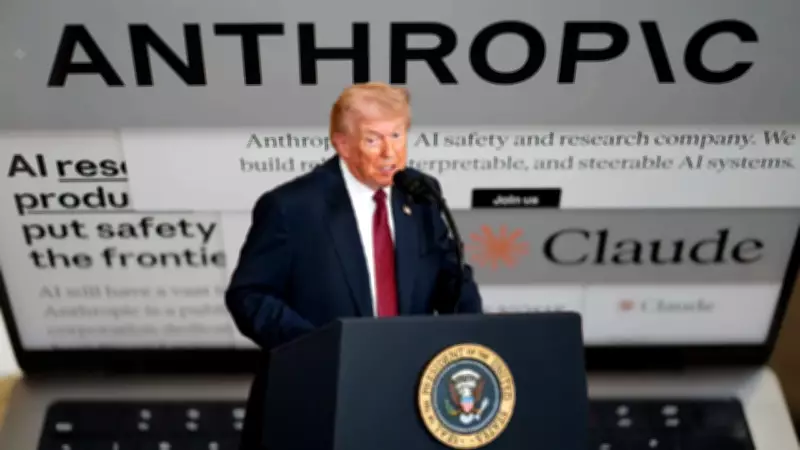

Artificial intelligence company Anthropic said on Friday that it will go to court to challenge the Pentagon's decision to designate it a supply-chain risk, according to a Reuters report. The announcement came shortly after US President Donald Trump directed all federal agencies to immediately halt their use of Anthropic's technology.

Trump's Directive and Sharp Criticism

President Trump ordered every federal department to stop using Anthropic's products immediately, while agencies that currently rely on the firm's tools have been given six months to phase them out. In a strongly worded post on Truth Social, Trump called the company's leadership "Leftwing nut jobs," underscoring a growing rift between the White House and one of the Pentagon's key AI providers.

"THE UNITED STATES OF AMERICA WILL NEVER ALLOW A RADICAL LEFT, WOKE COMPANY TO DICTATE HOW OUR GREAT MILITARY FIGHTS AND WINS WARS!" Trump wrote. He asserted that decisions regarding US military operations "belong to YOUR COMMANDER-IN-CHIEF" and accused Anthropic of attempting to "STRONG-ARM the Department of War" into complying with its terms of service rather than the Constitution.

Trump further claimed that the company's "selfishness is putting AMERICAN LIVES at risk" and endangering national security. He instructed "EVERY Federal Agency" to discontinue using Anthropic technology and warned of "major civil and criminal consequences" for non-compliance. "We don't need it, we don't want it, and will not do business with them again!" he declared.

Escalating Tensions and Core Disagreements

The confrontation follows days of mounting friction between the administration and Anthropic. The company's chatbot, Claude, has been integrated into various US government operations, including classified environments. At the center of the dispute are safeguards that Anthropic says are essential to keep its AI from being used for mass surveillance of Americans or in fully autonomous weapons systems.

Chief Executive Dario Amodei has consistently stated that the company "cannot in good conscience accede" to Pentagon demands for expanded usage rights. Defense Secretary Pete Hegseth reportedly warned that continued refusal could result in contract termination and the designation of Anthropic as a "supply chain risk." On the social media platform X, Hegseth wrote, "effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic."

Silicon Valley's Divided Response

The standoff has sparked differing opinions across Silicon Valley. Elon Musk has publicly endorsed the administration's position, while Sam Altman expressed support for Anthropic's safety concerns, indicating that he largely trusts the company's intentions. This division underscores the broader debate within the tech industry regarding AI ethics and government collaboration.

The legal challenge by Anthropic marks a significant escalation in the ongoing dispute, setting the stage for a potentially landmark case at the intersection of artificial intelligence, national security, and corporate governance.