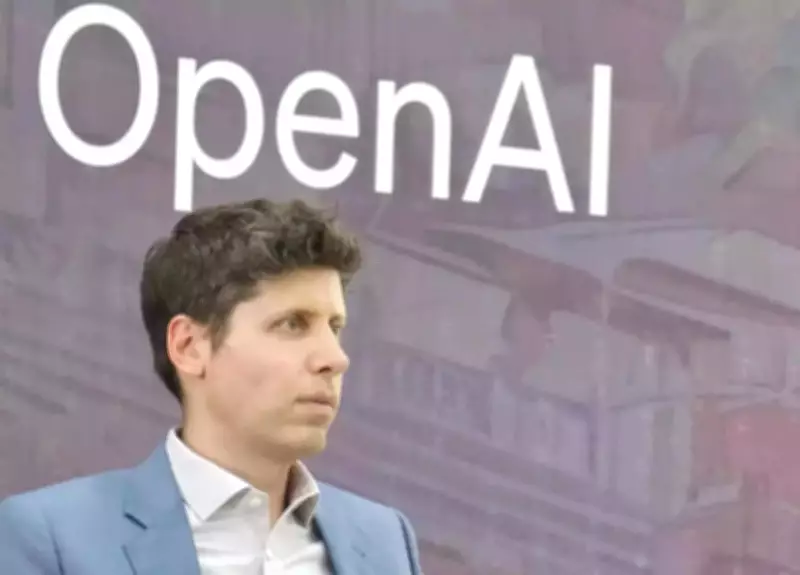

OpenAI CEO Expresses Gratitude for Nvidia's Computing Power Expansion

OpenAI CEO Sam Altman has publicly thanked Nvidia CEO Jensen Huang after Huang described Nvidia's aggressive efforts to expand computing capacity for the ChatGPT maker across multiple cloud platforms. In a post on X, formerly known as Twitter, Altman wrote, "Very grateful to Jensen for working to expand Nvidia's capacity at AWS so much for us!"

Nvidia's Substantial Investment in OpenAI

Altman's remarks came shortly after Huang said that Nvidia will invest $30 billion in OpenAI, describing it as one of the final opportunities to back a 'consequential company' before it goes public. Huang made these comments earlier this month at the Morgan Stanley Technology, Media & Telecom Conference, where he emphasized Nvidia's role in scaling artificial intelligence infrastructure globally.

Multi-Cloud Expansion Strategy

Huang also said that Nvidia has been working 'like mad' to ramp up OpenAI's computing power not only on Microsoft Azure but also on Amazon Web Services (AWS) and Oracle Cloud Infrastructure. The expansion is intended to give OpenAI the GPU resources it needs to support its rapidly growing and increasingly sophisticated AI systems.

- Nvidia is expanding infrastructure support across Microsoft Azure, AWS, and Oracle Cloud

- The goal is to provide OpenAI with necessary GPU resources for AI development

- The multi-cloud approach is intended to provide redundancy and scalability

Broader AI Industry Support

Beyond OpenAI, Nvidia is also expanding infrastructure support for other leading artificial intelligence companies, including Anthropic and Meta Platforms. This comes as competition intensifies across the technology sector to secure the computing backbone for next-generation AI models that are becoming increasingly complex and resource-intensive.

New Processor Specifically for OpenAI

Nvidia is reportedly developing a new processor specifically designed for AI inference computing – the type of processing that allows AI models to respond to user queries – with OpenAI as the primary target. According to industry reports, the announcement is expected at Nvidia's GTC developer conference in San Jose next month, and the ChatGPT maker has already agreed to become one of the largest customers for this specialized hardware.

Strategic Shift in Business Approach

According to a report by The Wall Street Journal, this development marks one of the most significant shifts in Nvidia's business strategy since the beginning of the artificial intelligence boom. Nvidia has long dominated the market for GPUs – specialized chips used for training AI models – including its Hopper, Blackwell and Rubin GPU series. Most analysts estimate Nvidia controls over 90% of the GPU market for AI applications.

- Analysts estimate Nvidia controls more than 90% of the AI GPU market

- The company's GPU lines include the Hopper, Blackwell and Rubin series

- Traditional GPUs were designed primarily for training AI models

Focus on Inference Computing

The artificial intelligence industry is undergoing a fundamental shift from building models to actually running them at scale, which has highlighted limitations in traditional GPU architecture. The new processor being developed is specifically designed around inference computing rather than training, representing a strategic adaptation to market demands. Nvidia will incorporate technology from Groq, a chip startup it acquired in a roughly $20 billion deal late last year, to enhance this new product line.

Nvidia's support comes at a critical time, as OpenAI continues to develop increasingly sophisticated AI systems that require ever more computing power.